AI-Generated Passwords Are Easier to Crack Than They Appear

Generative AI systems can write software, draft legal documents, summarize research papers, and assist with complex data analysis. But when it comes to password generation, relying on large language models (LLMs) may introduce a structural weakness that is not immediately visible.

Recent security testing indicates that AI-generated passwords frequently follow predictable internal patterns. Although they often pass standard password strength meters, their effective entropy can be dramatically lower than expected. In real-world attack scenarios, that difference can determine whether a password withstands automated cracking attempts—or falls within hours.

Why Language Models Struggle With True Randomness

Modern AI chat systems developed by companies such as OpenAI, Anthropic, and Google are designed to generate statistically probable outputs. They operate by repeatedly choosing the next token in a sequence from a learned probability distribution, which concentrates their output on likely continuations.

That optimization goal directly conflicts with cryptographic requirements.

Password security depends on unpredictability. Language models are optimized for predictability.

When prompted to create a “strong 16-character password with uppercase, lowercase, numbers, and special characters,” the model does not generate cryptographic randomness. Instead, it constructs a sequence that satisfies structural constraints while remaining statistically coherent according to its training patterns.

The result may look complex, but complexity is not the same as entropy.

Structural Patterns Observed In AI-Generated Passwords

In controlled testing environments, researchers requested multiple 16-character passwords from leading AI systems under identical prompts requiring character diversity and symbol inclusion.

Although outputs passed common online strength checks, deeper statistical analysis revealed clustering behavior:

- Repeated preference for certain starting letters
- Frequent use of specific digits in early positions
- Overrepresentation of particular symbols
- Underutilization of large portions of the ASCII character set
- Similar uppercase–lowercase sequencing patterns

Instead of uniform distribution across possible characters, passwords converged toward internal templates.

Even small structural biases significantly reduce the effective search space available to attackers.
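A minimal sketch of how such clustering could be measured, assuming a collected list of generated passwords. The sample strings and the helper names `position_counts` and `unused_characters` are invented here for illustration and are not real model output or part of any published test harness.

```python
from collections import Counter
import string

# 52 letters + 10 digits + 32 punctuation marks = 94 printable characters
PRINTABLE = string.ascii_letters + string.digits + string.punctuation

def position_counts(passwords, position):
    """Count which characters appear at a given index across the samples."""
    return Counter(p[position] for p in passwords if len(p) > position)

def unused_characters(passwords):
    """Return the printable ASCII characters that never appear in any sample."""
    seen = set("".join(passwords))
    return sorted(set(PRINTABLE) - seen)

# Hypothetical samples, invented for illustration only.
samples = ["G7$kLm2#pQx9!vRt", "G4#tRn8$wXz2@bLk", "K7$mPq3#rTy6!nWs"]

print(position_counts(samples, 0).most_common(1))  # most frequent first character
print(len(unused_characters(samples)), "of", len(PRINTABLE), "characters never used")
```

With only three samples the counts mean little. A meaningful analysis would need hundreds or thousands of outputs gathered under the same prompt.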

Understanding Password Entropy

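Password entropy is measured in bits. For a password whose characters are chosen independently and uniformly at random, it is the base-2 logarithm of the total number of possible passwords, so each additional bit doubles the search space.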

If L is the password length and N is the number of possible characters, then entropy is calculated as:

Entropy (bits) = log2(N^L) = L * log2(N)

Example: a password that is 16 characters long and uses the 94 printable ASCII characters has:

Entropy = 16 * log2(94)

Since log2(94) ≈ 6.5546:

Entropy ≈ 16 * 6.5546

Entropy ≈ 104.87 bits, or roughly 105 bits

That level of entropy corresponds to a truly random 16-character password drawn uniformly from the full ASCII printable set.
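The same arithmetic can be checked in a few lines of Python. This is a small sketch; the function name `uniform_entropy_bits` is chosen here for illustration, and the result only holds when every character is drawn independently and uniformly.

```python
import math

def uniform_entropy_bits(length, alphabet_size):
    """Entropy in bits of a password drawn uniformly: L * log2(N)."""
    return length * math.log2(alphabet_size)

bits = uniform_entropy_bits(16, 94)
print(f"{bits:.2f} bits")  # 104.87 bits
```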

What Happens When Predictability Reduces Entropy

If structural bias reduces effective randomness, entropy collapses.

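For example:

20 bits of entropy equals 2^20 = 1,048,576 combinations

27 bits of entropy equals 2^27 = 134,217,728 combinations

Modern GPU-based cracking systems can attempt billions of guesses per second against fast, unsalted hashes. At that rate, both search spaces are exhausted in well under a second. Even against deliberately slow password hashes, where an attacker might manage only about ten thousand guesses per second:

- 20-bit entropy can be exhausted in minutes
- 27-bit entropy can be exhausted in hours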

Even if the password appears visually complex, predictable structure allows attackers to prioritize high-probability combinations first.
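To see how exhaustion time scales with the guess rate, the sketch below divides the search space by an assumed number of guesses per second. Both rates are illustrative assumptions, not benchmarks. Real figures depend heavily on the hash algorithm and the attacker's hardware.

```python
def seconds_to_exhaust(entropy_bits, guesses_per_second):
    """Worst-case time to try every candidate in a 2**entropy_bits search space."""
    return 2 ** entropy_bits / guesses_per_second

# Assumed, illustrative guess rates (not measured values).
RATES = {"slow hash, 1e4 guesses/s": 1e4, "fast hash, 1e9 guesses/s": 1e9}

for bits in (20, 27):
    for label, rate in RATES.items():
        print(f"{bits} bits, {label}: {seconds_to_exhaust(bits, rate):,.3f} s")
```

On average an attacker finds the password after searching about half the space, so typical times are roughly half the worst-case values printed here.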

Why Online Password Checkers Can Be Misleading

Most password strength tools evaluate:

- Length
- Character category diversity
- Absence of dictionary words
- Presence of symbols

They rarely analyze probabilistic distribution patterns.
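A deliberately naive checker makes the limitation concrete. The sketch below is a hypothetical rule set written for this article, not the logic of any particular tool, and it omits the dictionary-word check. It looks only at length and character classes, so a rigidly templated string passes.

```python
import string

def naive_strength_check(password, min_length=12):
    """Pass if the password is long enough and uses all four character classes."""
    return (
        len(password) >= min_length
        and any(c.isupper() for c in password)
        and any(c.islower() for c in password)
        and any(c.isdigit() for c in password)
        and any(c in string.punctuation for c in password)
    )

# Uppercase first, digit second, alphabetical run, symbol last: highly structured.
templated = "A1bcdefghijklmn#"
print(naive_strength_check(templated))  # True
```

Nothing in these rules asks how the characters were chosen, which is the property that actually determines entropy.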

If an AI model consistently:

- Starts with an uppercase letter
- Places a digit in position two
- Prefers specific symbols such as # or $
- Avoids certain letters

then attackers can build optimized attack dictionaries that test likely combinations first.

Instead of brute-forcing 94^16 possibilities, they narrow the search space using statistical assumptions. This dramatically reduces cracking time.
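As a rough illustration of that narrowing, the sketch below compares the full 94^16 space with the space implied by a positional template. The template itself (26 uppercase letters, then 10 digits, then 13 characters from a favoured set of 30, then one of 2 symbols) is a hypothetical assumption, not a measured pattern.

```python
import math

def search_space_bits(choices_per_position):
    """Sum log2 of the number of candidate characters at each position."""
    return sum(math.log2(n) for n in choices_per_position)

full = search_space_bits([94] * 16)

# Hypothetical template: uppercase, digit, 13 favoured characters, 1 of 2 symbols.
template_choices = [26, 10] + [30] * 13 + [2]
template = search_space_bits(template_choices)

print(f"full space:     {full:.2f} bits")
print(f"template space: {template:.2f} bits")
print(f"difference:     {full - template:.2f} bits")
```

The template only finds passwords that actually match it, so the attacker trades coverage for speed. That trade pays off when a generator's output follows the template most of the time.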

The Predictability Paradox

Dario Amodei, CEO of Anthropic, has previously noted that large language models are optimized to produce high-probability outputs. This architectural property cannot be eliminated simply by writing better prompts.

LLMs are not cryptographically secure random number generators.

True randomness in password systems typically relies on:

- Cryptographically secure pseudorandom number generators (CSPRNGs)
- High-entropy system sources
- Hardware randomness extraction
- Deterministic algorithms seeded with unpredictable input

Without integration of these mechanisms, conversational AI remains structurally unsuited for secure secret generation.
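For comparison, here is a minimal sketch of CSPRNG-backed generation using Python's standard `secrets` module, which draws from the operating system's secure random source. The function name and the 94-character alphabet are choices made for this example.

```python
import secrets
import string

# 52 letters + 10 digits + 32 punctuation marks = 94 printable characters
ALPHABET = string.ascii_letters + string.digits + string.punctuation

def generate_password(length=16):
    """Draw each character independently and uniformly using the OS CSPRNG."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))

print(generate_password())
```

Because every position is an independent uniform draw, the L * log2(N) entropy estimate applies directly to this generator's output.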

Why Visual Complexity Is Not Security

An AI-generated password might look like this:

Uppercase letter + digit + mixed-case string + symbol

To a human user, this appears strong. To a basic validation algorithm, it meets complexity requirements.

But if the model systematically favors certain letters or digit placements, the effective N value shrinks.

If instead of 94 characters the model effectively uses only 30 frequently selected characters, then:

Entropy = 16 * log2(30)

Since log2(30) ≈ 4.907:

Entropy ≈ 16 * 4.907

Entropy ≈ 78.51 bits

And if positional bias further reduces unpredictability, entropy can fall dramatically—sometimes below 30 bits in extreme clustering scenarios.

The reduction is exponential, not linear: every bit of entropy lost halves the attacker's search space.
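A hard cutoff at 30 characters is a simplification. A model is more likely to use all characters but weight some heavily, and Shannon entropy captures that case. The sketch below uses a made-up distribution in which 30 favoured characters share 90% of the probability. The numbers are assumptions for illustration only, and positions are treated as independent.

```python
import math

def shannon_entropy_bits(probabilities):
    """Shannon entropy H = -sum(p * log2(p)) of one character draw, in bits."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)

uniform = [1 / 94] * 94

# Hypothetical skew: 30 favoured characters share 90% of the probability mass,
# and the remaining 64 characters share the other 10%.
skewed = [0.9 / 30] * 30 + [0.1 / 64] * 64

print(f"uniform: {16 * shannon_entropy_bits(uniform):.2f} bits")
print(f"skewed:  {16 * shannon_entropy_bits(skewed):.2f} bits")
```

Correlations between positions, such as a digit reliably following an uppercase letter, would push the real figure lower than this per-character estimate suggests.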

Dedicated Password Managers As A Safer Alternative

Security professionals recommend using password managers that rely on cryptographically secure generation mechanisms rather than language models.

Established solutions include:

- 1Password
- Bitwarden

These systems generate passwords using secure entropy sources and CSPRNG algorithms. They also provide:

- Encrypted vault storage
- Zero-knowledge architecture
- Cross-device synchronization
- Breach monitoring alerts
- Secure autofill mechanisms

Some AI chat systems have begun warning users not to assign AI-generated passwords to sensitive accounts, particularly financial or identity-critical services. That precaution is justified.

AI-Assisted Attacks Against AI-Generated Passwords

A further complication emerges when attackers use machine learning tools to optimize cracking strategies. Neural-network-based password guessing models can detect structural regularities across generated datasets.

If AI tools systematically embed predictable features in generated passwords, adversarial AI can learn those biases.

In such a scenario, AI-generated passwords could become disproportionately vulnerable to AI-assisted cracking systems.

When Could AI Safely Generate Passwords?

AI could safely assist password generation only if:

- It delegates randomness to a secure backend CSPRNG
- The model acts merely as an interface layer
- The entropy source is cryptographically validated

In that architecture, the AI does not “compose” the password. It retrieves securely generated output from a dedicated cryptographic module.

Without that separation, the statistical nature of language modeling remains incompatible with strong secret generation.
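One way to picture that separation is a tool-call layer in which the model supplies only parameters and never characters. The sketch below is hypothetical: the request format, the `TOOLS` registry, the `handle_tool_call` helper, and the length bounds are all assumptions made for illustration and do not describe any vendor's API.

```python
import secrets
import string

ALPHABET = string.ascii_letters + string.digits + string.punctuation

def generate_password_tool(length):
    """Backend tool: all randomness comes from the OS CSPRNG via `secrets`."""
    if not 12 <= length <= 128:  # assumed policy bounds
        raise ValueError("length must be between 12 and 128")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))

# Hypothetical registry mapping tool names to backend functions.
TOOLS = {"generate_password": generate_password_tool}

def handle_tool_call(call):
    """Run a request shaped like {"name": ..., "arguments": {...}}."""
    return TOOLS[call["name"]](**call["arguments"])

# The model would emit only this request; it never chooses any characters.
request = {"name": "generate_password", "arguments": {"length": 20}}
password = handle_tool_call(request)
print(len(password))  # 20
```

In a real deployment the generated value would also need to reach the user without being logged or retained in conversation history, which this sketch does not address.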

Practical Security Recommendations

For individuals:

- Use a reputable password manager
- Enable multi-factor authentication (MFA)
- Avoid password reuse across platforms
- Prefer randomly generated credentials over memorable constructions
- Avoid generating passwords in public chat interfaces

For organizations:

- Establish password manager policies
- Enforce MFA or hardware-based authentication
- Conduct credential hygiene audits
- Educate employees about AI-related security misconceptions
- Monitor for leaked or reused credentials

The Broader Security Implication

Generative AI tools increasingly mediate everyday workflows. As trust in AI assistance grows, users may outsource not only creative tasks but also security-critical decisions.

However, password generation is not a linguistic task. It is a cryptographic task.

AI models excel at producing coherent, high-probability sequences. Password security demands the opposite: low-probability unpredictability.

Until generative systems integrate true cryptographic randomness by design, AI-generated passwords should be treated as convenience suggestions—not as secure authentication credentials.

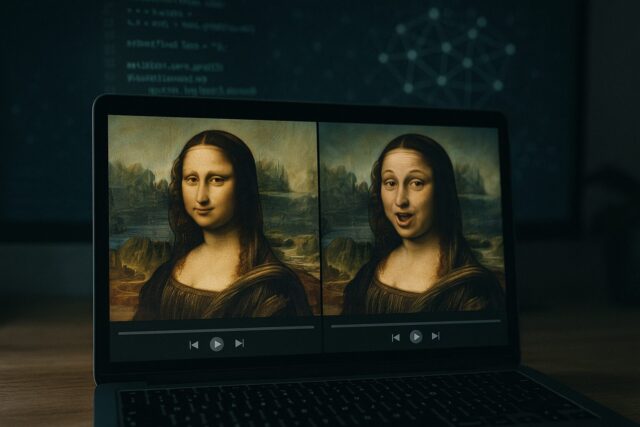
